7 Customer Analytics Solutions: Building a Stack that Doesn’t Lie to You

Meta: Enterprise dashboards display inaccurate data when pipelines break. Here are seven customer analytics solutions that go beyond trendy add-ons.

Enterprise leaders face a specific operational problem in 2026:

They purchase expensive analytics software suites. They integrate these suites into their company infrastructure. Yet, their employees still make decisions using outdated information. The software fails to deliver direct business value.

The issue lies in how businesses move and process data, especially when customer data platforms are not properly leveraged to unify and activate that data.

Legacy platforms force companies to duplicate their data, which directly impacts how effectively organizations can use data analytics to improve customer experience. They require manual data tagging. They fail to alert engineers when data pipelines break. These system limitations cause delays in product releases, missed opportunities, and inaccurate reporting.

To solve these exact problems, companies must adopt and adapt.

You don’t need ‘big’ names clogging your tech stack. You need tools that know data inside and out, which means tools that can:

- Route data efficiently

- Monitor database health automatically

- Categorize user feedback without human intervention

We examined the current enterprise software market and identified seven specific tools that tackle the precise technical and operational pain points enterprise leaders face today.

1. Hightouch

The Pain Point It Tackles

High-quality customer data is often stored in centralized cloud data warehouses. But SDRs and support agents don’t log into data warehouses; they work within operational applications such as Salesforce, HubSpot, or Zendesk.

That poses a conundrum: data warehouses don’t natively communicate with these applications. The consequence? Employees interact with customers using incomplete or outdated data.

Hightouch bridges this gap, ensuring your teams have the full picture.

It provides Reverse ETL (Extract, Transform, Load) software: it extracts data from the warehouse and writes it directly into business applications.

How It Delivers Value

A data engineer writes a standard SQL query within the Hightouch interface, then instructs Hightouch to run this query against the company data warehouse on a specific schedule.

The engineer then maps the output columns of that query to custom fields inside the company CRM.

Hightouch synchronizes the data automatically.
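To make the query-then-map flow concrete, here is a minimal Python sketch of the same idea, using an in-memory SQLite database as a stand-in for the warehouse. It is not Hightouch's API: the table, the query, and the CRM field names (`Active_Users__c` and the others) are hypothetical, and the final step only builds the payloads a sync would send rather than calling a real CRM.

```python
import sqlite3

# Hypothetical sync query: per-account usage metrics computed in the warehouse.
USAGE_QUERY = """
    SELECT account_id,
           COUNT(DISTINCT user_id) AS active_users,
           COUNT(*) AS event_count
    FROM product_events
    GROUP BY account_id
    ORDER BY account_id
"""

# Hypothetical mapping from query output columns to CRM custom fields.
FIELD_MAPPING = {
    "account_id": "External_Account_Id__c",
    "active_users": "Active_Users__c",
    "event_count": "Product_Events__c",
}


def extract(conn: sqlite3.Connection, query: str) -> list[dict]:
    """Run the sync query and return each row as a dict keyed by column name."""
    cursor = conn.execute(query)
    columns = [desc[0] for desc in cursor.description]
    return [dict(zip(columns, row)) for row in cursor.fetchall()]


def map_to_crm(rows: list[dict], mapping: dict[str, str]) -> list[dict]:
    """Rename query columns to CRM field names; unmapped columns are dropped."""
    return [
        {mapping[col]: value for col, value in row.items() if col in mapping}
        for row in rows
    ]


def build_demo_warehouse() -> sqlite3.Connection:
    """Create a tiny in-memory table that stands in for the real warehouse."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE product_events (account_id TEXT, user_id TEXT)")
    conn.executemany(
        "INSERT INTO product_events VALUES (?, ?)",
        [("acme", "u1"), ("acme", "u1"), ("acme", "u2"), ("globex", "u9")],
    )
    return conn


payloads = map_to_crm(extract(build_demo_warehouse(), USAGE_QUERY), FIELD_MAPPING)
print(payloads)
```

In a real deployment, a scheduler would run this on the cadence the engineer chose and push each payload to the CRM, matching records on the external ID field.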

An SDR opens an account record in Salesforce and immediately sees the exact product usage metrics updated from the database ten minutes prior. The sales team identifies upsell opportunities based on actual software usage.

The result: The company increases revenue without asking the data team to build another dashboard, improving overall customer acquisition efficiency.

2. Clootrack

The Pain Point It Tackles

Enterprise companies receive thousands of support tickets and chat transcripts every single day. Categorizing that text is grueling for data science teams, who spend weeks building manual keyword lists to do it.

This manual process limits analysis to ‘known’ problems and restricts deeper insight into the voice of the customer. If customers complain about a brand-new software bug, the analytics tool ignores the complaint because analysts have not yet created a tag for it.

That’s where Clootrack comes in.

Clootrack analyzes unstructured text data using unsupervised ML. It eliminates the need for manual keyword tagging.

How It Delivers Value

Users connect their Zendesk account, App Store developer account, or CRM directly to Clootrack. The software ingests the raw text. It automatically processes the sentences and groups similar phrases.

It creates categories based purely on word frequency and contextual meaning without any human input.
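Clootrack's models are not described here, so the sketch below only illustrates the general idea of grouping feedback without a predefined tag list. It swaps in a deliberately simple technique, greedy clustering by word overlap (Jaccard similarity), which ignores context; production systems typically rely on learned text representations. The stopword list, the 0.3 threshold, and the function names are assumptions for illustration.

```python
import re
from collections import Counter

# Hypothetical minimal stopword list; real pipelines use much larger ones.
STOPWORDS = {"a", "an", "the", "i", "my", "me", "is", "was", "on", "to", "and", "it", "of", "in", "for"}


def tokenize(text: str) -> set[str]:
    """Lowercase the text, keep alphabetic words, and drop stopwords."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of distinct words two texts have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_feedback(texts: list[str], threshold: float = 0.3) -> list[list[str]]:
    """Greedy single-pass clustering: each text joins the first cluster whose
    seed text overlaps with it enough, otherwise it seeds a new cluster."""
    clusters: list[tuple[set[str], list[str]]] = []
    for text in texts:
        words = tokenize(text)
        for seed_words, members in clusters:
            if jaccard(words, seed_words) >= threshold:
                members.append(text)
                break
        else:
            clusters.append((words, [text]))
    return [members for _, members in clusters]


def label(members: list[str]) -> str:
    """Name a cluster after its most frequent word (ties broken alphabetically)."""
    counts = Counter(w for text in members for w in tokenize(text))
    return min(counts, key=lambda w: (-counts[w], w))


tickets = [
    "Charged twice on my invoice",
    "Invoice charged twice this month",
    "App crashes on login",
    "Login screen crashes",
]
for group in cluster_feedback(tickets):
    print(label(group), group)
```

No category list is supplied up front: the billing and login clusters emerge only from the overlap between the tickets themselves.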

Product managers open a dashboard and see a new cluster of complaints about a specific billing error. They spot the error immediately instead of waiting for a data analyst to discover the trend manually. The engineering team patches the billing error the same day.

The result: The company prevents further customer churn and reduces inbound support ticket volume.

3. Monte Carlo Data

The Pain Point It Tackles

Data infrastructure breaks frequently.

Software engineers change API endpoints. Third-party vendors alter their data formats. Tables fail to update during scheduled nightly loads. Executives see dashboards showing zero sales for a specific region, trust them, and make incorrect strategic choices.

Over time, they lose confidence in the internal data team.

Monte Carlo helps instill that confidence.

It provides data observability software that monitors the entire data infrastructure for anomalies and alerts engineers before business users notice the errors.

How It Delivers Value

The solution seamlessly connects to data warehouses and BI tools. It scans historical data and establishes baseline metrics for data volume and schema structure.

Monte Carlo detects the anomaly immediately if a daily data load drops from ten thousand rows to zero. It sends an automated alert directly to the engineering team via Slack or PagerDuty.
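The baseline-and-alert behaviour can be illustrated with a simple statistical check. This is a minimal sketch, not Monte Carlo's detection logic: it assumes the baseline is just the mean and sample standard deviation of recent daily row counts, and the three-sigma threshold, table name, and alert text are hypothetical choices. Delivering the message to Slack or PagerDuty is left out.

```python
from statistics import mean, stdev
from typing import Optional


def is_volume_anomaly(history: list[int], today: int, z_threshold: float = 3.0) -> bool:
    """Flag today's row count if it sits more than z_threshold standard
    deviations away from the mean of the historical daily loads."""
    if len(history) < 2:
        raise ValueError("need at least two historical loads to build a baseline")
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        # Perfectly stable history: any change at all counts as anomalous.
        return today != baseline
    return abs(today - baseline) / spread > z_threshold


def build_alert(table: str, history: list[int], today: int) -> Optional[str]:
    """Return an alert message for the on-call channel, or None if the load looks normal."""
    if not is_volume_anomaly(history, today):
        return None
    return f"[volume anomaly] {table}: loaded {today} rows, baseline about {round(mean(history))}"


daily_rows = [10000, 10250, 9800, 10100, 9950]
print(build_alert("orders_daily", daily_rows, 0))
```

Real observability tools also account for weekly seasonality and growth trends; a flat mean like this one would raise false alarms on, say, quiet weekends.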

Data engineers then pause the data pipeline, fix the broken connection, and backfill the missing data.

The result: Business users log in the next morning and view completely accurate reports. Executives trust the numbers they see and make capital allocation decisions based on them.

4. Zepic

The Pain Point It Tackles

Modern web browsers block third-party tracking cookies.

Mobile operating systems restrict cross-application tracking. Traditional web analytics software cannot track users accurately across multiple domains anymore.

Companies lose visibility into their conversion paths, making it difficult to accurately measure customer acquisition costs. They spend marketing budgets blindly.

Zepic aims to fix that.

Zepic manages first-party data collection and identity resolution. It tracks users through direct interactions rather than relying on browser cookies.

How It Delivers Value

The platform unifies user identities using deterministic data points. It uses email addresses, phone numbers, and account IDs to track customers.
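Deterministic identity resolution can be pictured as a graph problem: two records belong to the same person whenever they share an exact identifier. The sketch below uses a union-find structure to merge records that share an email, phone number, or account ID. It illustrates the general technique under simple assumptions (lowercased emails, digits-only phone numbers) and is not Zepic's implementation; the field names are hypothetical.

```python
import re
from collections.abc import Hashable


class UnionFind:
    """Disjoint-set structure with path halving."""

    def __init__(self) -> None:
        self.parent: dict[Hashable, Hashable] = {}

    def find(self, node: Hashable) -> Hashable:
        self.parent.setdefault(node, node)
        while self.parent[node] != node:
            self.parent[node] = self.parent[self.parent[node]]  # path halving
            node = self.parent[node]
        return node

    def union(self, a: Hashable, b: Hashable) -> None:
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a


def normalize(kind: str, value: str) -> str:
    """Canonicalize identifiers so trivial formatting differences still match."""
    if kind == "email":
        return value.strip().lower()
    if kind == "phone":
        return re.sub(r"\D", "", value)
    return value.strip()


def resolve_identities(records: list[dict]) -> list[list[int]]:
    """Group record indexes into profiles that share any exact identifier."""
    uf = UnionFind()
    for i, record in enumerate(records):
        uf.find(("record", i))  # register records that carry no identifiers
        for kind in ("email", "phone", "account_id"):
            value = record.get(kind)
            key = normalize(kind, value) if value else ""
            if key:
                uf.union(("record", i), (kind, key))
    profiles: dict[Hashable, list[int]] = {}
    for i in range(len(records)):
        profiles.setdefault(uf.find(("record", i)), []).append(i)
    return sorted(profiles.values())


records = [
    {"email": "Ana@Example.com"},
    {"email": "ana@example.com", "phone": "+1 (555) 010-0001"},
    {"phone": "15550100001", "account_id": "A-42"},
    {"email": "bo@example.com"},
]
print(resolve_identities(records))
```

Records 0 and 2 share no identifier directly, yet they end up in one profile through record 1. A production system would also need rules for shared identifiers, such as a family email address, which this sketch would merge without question.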

Zepic monitors customer interactions across direct messaging applications, custom mobile apps, and conversational AI interfaces.

Marketing teams use Zepic to build audience segments based entirely on explicit customer interactions, aligning closely with modern B2B SaaS customer segmentation strategies. They trigger personalized campaigns across email and SMS and attribute revenue to specific campaigns without relying on deprecated tracking technology.

The result: The company reduces wasted ad spend and improves the return on investment for direct marketing.

5. Enterpret

The Pain Point It Tackles

Engineering teams receive feature requests from multiple departments simultaneously:

- The sales team wants new features to close enterprise deals.

- The support team wants bug fixes to reduce ticket volume.

- The product team wants to build entirely new modules.

Leaders struggle to prioritize development work based on actual revenue impact, often due to a lack of clearly defined ideal customer profiles. They guess which features matter most.

Enterpret helps bring the focus back to what truly matters.

Enterpret links qualitative customer feedback directly to quantitative business metrics and product development workflows.

How It Delivers Value

The software ingests data from CRM systems, survey tools, and support software. It uses natural language processing to extract specific feature requests from the text. It then links these requests to specific Jira tickets and Salesforce opportunity values.

A product leader views an Enterpret dashboard.

They see exactly how much potential pipeline revenue depends on building a specific API integration. They also see how many current enterprise customers requested the same integration.
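The roll-up behind such a dashboard can be sketched in a few lines, assuming the NLP step has already produced links between a feature request, the requesting account, and (optionally) an open opportunity. The data class, field names, and sample values are hypothetical; Enterpret's actual data model is not described here. Each opportunity is counted once per feature so that repeated mentions do not inflate the pipeline figure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FeedbackLink:
    feature: str                   # feature request extracted from feedback text
    account: str                   # account that asked for it
    opportunity_id: Optional[str]  # open deal tied to the request, if any


def rank_features(
    links: list[FeedbackLink],
    opportunity_values: dict[str, float],
    current_customers: set[str],
) -> list[tuple[str, float, int]]:
    """Return (feature, pipeline value, requesting customers), largest pipeline first."""
    pipeline: dict[str, float] = {}
    counted_opps: dict[str, set[str]] = {}
    customers: dict[str, set[str]] = {}
    for link in links:
        pipeline.setdefault(link.feature, 0.0)
        opps = counted_opps.setdefault(link.feature, set())
        accounts = customers.setdefault(link.feature, set())
        if link.opportunity_id and link.opportunity_id not in opps:
            opps.add(link.opportunity_id)  # count each deal once per feature
            pipeline[link.feature] += opportunity_values[link.opportunity_id]
        if link.account in current_customers:
            accounts.add(link.account)
    rows = [(f, pipeline[f], len(customers[f])) for f in pipeline]
    return sorted(rows, key=lambda row: (-row[1], -row[2], row[0]))


links = [
    FeedbackLink("Billing API integration", "Initech", "OPP-1"),
    FeedbackLink("Billing API integration", "Initech", "OPP-1"),  # repeated mention
    FeedbackLink("Billing API integration", "Acme", None),
    FeedbackLink("SSO audit log", "Globex", "OPP-2"),
    FeedbackLink("SSO audit log", "Acme", None),
]
values = {"OPP-1": 120000.0, "OPP-2": 45000.0}
for row in rank_features(links, values, current_customers={"Acme", "Globex"}):
    print(row)
```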

The product leader then allocates engineering resources based entirely on this financial data.

The result: The company ships features that directly secure new revenue and retain existing high-value accounts.

6. Amplitude

The Pain Point It Tackles

Product managers use one software tool to view user drop-off rates, often missing a unified view provided by customer journey analytics. They use a completely different software tool to launch an A/B test to fix that drop-off.

This workflow requires complex integrations between two separate vendors. The disconnect delays product improvements and introduces data discrepancies between the two systems.

Amplitude combines product event tracking, feature flagging, and experimentation capabilities within a single platform. That is its strongest differentiator.

How It Delivers Value

The software records every action a user takes within a web or mobile application. A product manager creates a funnel report. They identify a checkout screen where fifty percent of users close the application.
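A funnel report boils down to counting how many users complete each step in order. The sketch below is a minimal, tool-agnostic version of that calculation, not Amplitude's implementation; it assumes each user's events are already sorted by time, and the event names and sample users are hypothetical.

```python
def funnel_counts(user_events: dict[str, list[str]], steps: list[str]) -> list[int]:
    """Count users who reach each funnel step, requiring the steps in order."""
    reached = [0] * len(steps)
    for events in user_events.values():
        progress = 0
        for name in events:  # events assumed sorted by timestamp
            if progress < len(steps) and name == steps[progress]:
                progress += 1
        for step_index in range(progress):
            reached[step_index] += 1
    return reached


def drop_off_rates(reached: list[int]) -> list[float]:
    """Share of users lost between each pair of consecutive steps."""
    return [
        1 - after / before if before else 0.0
        for before, after in zip(reached, reached[1:])
    ]


steps = ["view_cart", "checkout_screen", "purchase"]
users = {
    "u1": ["view_cart", "checkout_screen", "purchase"],
    "u2": ["view_cart", "checkout_screen"],
    "u3": ["view_cart", "checkout_screen", "purchase"],
    "u4": ["view_cart", "checkout_screen"],
    "u5": ["view_cart"],
}
counts = funnel_counts(users, steps)
print(counts, drop_off_rates(counts))
```

With this sample, four users reach the checkout screen and two complete a purchase, which is the fifty percent drop-off the scenario describes.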

They use the same Amplitude interface to create a design variant of that checkout screen. They deploy the variant to ten percent of active users.
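Sending a variant to ten percent of active users usually relies on deterministic bucketing, so a given user sees the same variant on every visit. The sketch below shows one common way to do it, hashing the user ID together with a flag key; the flag key and function name are hypothetical, and Amplitude's own bucketing scheme may differ.

```python
import hashlib


def in_rollout(user_id: str, flag_key: str, percentage: float) -> bool:
    """Deterministically decide whether a user falls inside a percentage rollout.

    Hashing flag_key together with user_id gives each flag an independent,
    stable assignment: the same user always lands in the same bucket for it.
    """
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000 / 100  # 0.00 to 99.99
    return bucket < percentage


enrolled = [
    uid
    for uid in (f"user-{n}" for n in range(10_000))
    if in_rollout(uid, "checkout_redesign", 10)
]
print(len(enrolled))
```

Because a user's bucket never changes, widening the rollout from ten percent to one hundred keeps every early participant enrolled.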

Teams measure the exact impact of the new design on user retention without switching apps. They determine the winning design and roll it out to all users globally.

The result: The company steadily accelerates product development cycles and increases conversion rates.

7. Medallia

The Pain Point It Tackles

Customers routinely ignore text-based surveys, especially as digital fatigue erodes their attention spans. Companies achieve very low response rates on email questionnaires. Furthermore, text analysis completely misses tone and urgency.

Companies miss the critical context of angry phone calls or frustrated facial expressions during user testing sessions, which limits their understanding of the complete customer journey and the actual customer experience.

But Medallia ensures you don’t miss out.

Medallia accurately processes voice recordings and video interactions to evaluate customer satisfaction.

How It Delivers Value

The platform ingests audio files directly from enterprise call centers. It transcribes the audio into text and analyzes the acoustic properties of the speaker’s voice to measure stress, anger, or hesitation. It also processes user-submitted video recordings to track visual cues.

Customer success managers configure Medallia to trigger automated alerts based on acoustic stress levels.
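An alert rule of this kind can be sketched as a threshold check over scored call segments. The example assumes an upstream model has already given each segment of a call a stress score between 0 and 1; the score scale, the 0.8 threshold, the two-segment minimum, and all names are hypothetical rather than Medallia configuration. Requiring more than one high-stress segment is one way to avoid paging an account director over a single spike.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CallSegment:
    call_id: str
    account: str
    stress_score: float  # hypothetical 0.0-1.0 score from an upstream voice model


def calls_to_escalate(
    segments: list[CallSegment], threshold: float = 0.8, min_segments: int = 2
) -> list[tuple[str, str]]:
    """Return (call_id, account) pairs with at least min_segments high-stress segments."""
    hits: dict[str, int] = {}
    accounts: dict[str, str] = {}
    for seg in segments:
        if seg.stress_score >= threshold:
            hits[seg.call_id] = hits.get(seg.call_id, 0) + 1
            accounts[seg.call_id] = seg.account
    return sorted(
        (call_id, accounts[call_id])
        for call_id, count in hits.items()
        if count >= min_segments
    )


segments = [
    CallSegment("c1", "Acme", 0.90),
    CallSegment("c1", "Acme", 0.85),
    CallSegment("c1", "Acme", 0.30),
    CallSegment("c2", "Globex", 0.95),
    CallSegment("c2", "Globex", 0.20),
    CallSegment("c3", "Initech", 0.10),
]
for call_id, account in calls_to_escalate(segments):
    print(f"Notify account director for {account} about call {call_id}")
```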

An enterprise client expresses extreme frustration on a routine support call. Medallia detects the vocal stress and notifies the account director immediately. The account director calls the client, resolves the underlying issue, and prevents a major contract cancellation.

The result: The company retains high-value clients by identifying friction points that traditional text surveys miss entirely.

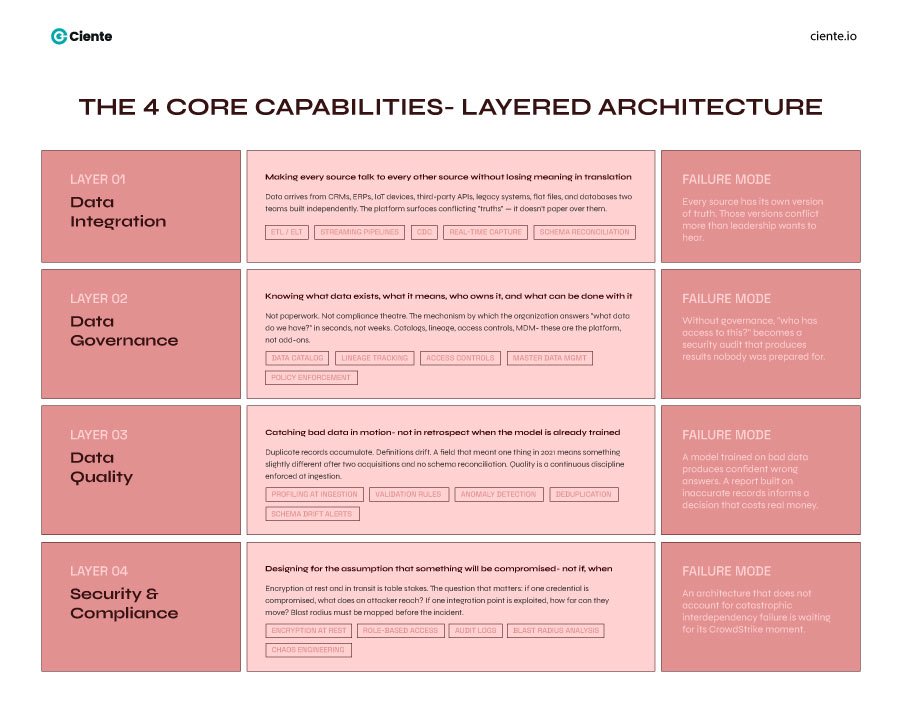

The Component-Based Architecture

The enterprise software market demands modular architecture, similar to how modern marketing automation strategies balance efficiency and personalization.

Industry leaders no longer buy single-platform solutions to handle all analytics tasks. They build a central data warehouse and purchase specialized software to handle specific data routing, monitoring, and analysis tasks.

This approach reduces vendor lock-in. It lets companies swap out individual tools when better technology emerges.
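In code, the swap-out property comes from depending on a narrow interface rather than on a specific vendor. The sketch below uses a Python `Protocol` to define a hypothetical `Destination` that any routing tool could satisfy; the class names are illustrative and do not correspond to any product's SDK.

```python
import csv
import io
from typing import Protocol


class Destination(Protocol):
    """Anything that can receive a batch of records and report how many it accepted."""

    def send(self, records: list[dict]) -> int: ...


class InMemoryCrm:
    """Hypothetical stand-in for a CRM destination; it just keeps what it receives."""

    def __init__(self) -> None:
        self.received: list[dict] = []

    def send(self, records: list[dict]) -> int:
        self.received.extend(records)
        return len(records)


class CsvExport:
    """Hypothetical alternative destination that writes records as CSV text."""

    def __init__(self, fieldnames: list[str]) -> None:
        self.buffer = io.StringIO()
        self.writer = csv.DictWriter(self.buffer, fieldnames=fieldnames)
        self.writer.writeheader()

    def send(self, records: list[dict]) -> int:
        self.writer.writerows(records)
        return len(records)


def run_sync(records: list[dict], destination: Destination) -> int:
    """Core pipeline logic: validate records, then hand them to whatever destination is plugged in."""
    valid = [r for r in records if r.get("account_id")]
    return destination.send(valid)


records = [{"account_id": "acme", "score": 87}, {"account_id": "", "score": 12}]
crm = InMemoryCrm()
export = CsvExport(["account_id", "score"])
print(run_sync(records, crm), run_sync(records, export))
```

`run_sync` never changes when a destination is replaced; only the object passed in does, which is the property that keeps a component-based stack swappable.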

Executives who adopt this direct, component-based approach resolve their operational bottlenecks.

They ensure their data pipelines function correctly, distribute accurate data to their operational teams, analyze customer feedback efficiently, and allocate resources based on factual business metrics, strengthening the overall customer value proposition.

These are the benefits that truly impactful customer analytics solutions bring to the table. The ball is in your court: would you rather count impressions as the market moves on, or be present in the moments that truly matter to your customers?