Answer Engine Optimization vs SEO: A Recursion in Marketing

Everyone is treating AEO like a new discipline that replaces SEO. It does not. It is the same discipline with a sharper mandate. The brands that understand this will win. The ones chasing the new acronym without the foundation will not.

A new term enters the marketing conversation.

Decks get updated. Agencies rebrand their service pages. LinkedIn fills up with takes about how SEO is dead and AEO is the future and if you are not optimizing for answer engines right now you are already behind.

And somewhere in the middle of all of that noise, the actual idea gets lost. AEO is not a revolution. It is a recursion. The same loop, running again, with a slightly different interface on top.

Let us actually talk about what is happening here.

What AEO and SEO Are Actually Doing

The Definition Everyone Agrees On and the Conclusion They Get Wrong

SEO: optimize your content so search engines can find it, index it, rank it, and surface it to people looking for something relevant.

AEO: optimize your content so AI systems can find it, extract it, trust it, and surface it as a direct answer to a specific query.

There are clearly some similarities here.

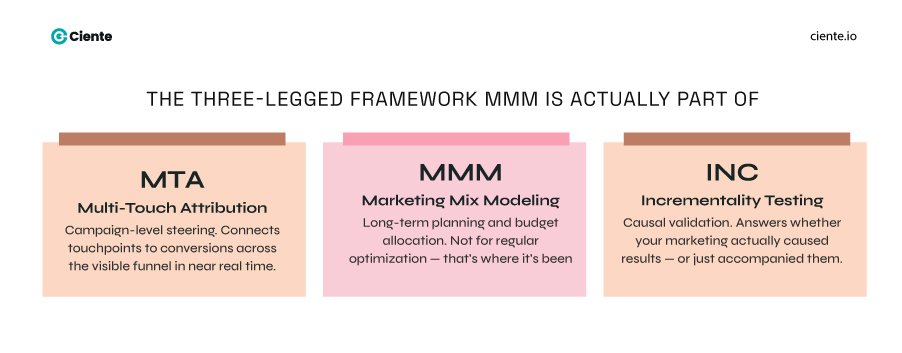

The underlying requirement in both is identical. You need content that is clear, structured, authoritative, and genuinely useful to the person asking the question. The distribution layer changed. The crawlers are smarter. The interface looks different. But the job is the same job.

What the industry keeps getting wrong is treating AEO as a departure from SEO rather than a continuation of it. The blogs will tell you that SEO is for rankings and AEO is for answers, and that they are two separate strategies requiring two separate teams with two separate approaches.

That framing is wrong. And it is costing people real money, especially when marketing investments are already under pressure to show measurable ROI.

The Underlying Structure Has Not Changed

Go back to basics for a moment.

What does Google’s algorithm fundamentally reward? Content that demonstrates expertise, authority, and trustworthiness on a specific topic, structured clearly enough that a bot can understand what it means, distributed across a domain that has earned credibility over time.

What does an LLM reward when deciding what to cite? Content that demonstrates expertise, authority, and trustworthiness on a specific topic, structured clearly enough that the model can extract a reliable answer, sourced from a domain it has learned to treat as credible.

The words are almost identical. Because the logic is almost identical.

Yes, there are technical differences. Schema markup matters more for AEO. Conversational phrasing matters more for AEO. Concise answer blocks above the fold matter more for AEO. These are real differences at the execution level.

But they are not differences in the underlying structure. They are refinements of it. Tactical adjustments built on the same strategic foundation.
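To make the schema markup refinement concrete, here is a minimal sketch of a schema.org FAQPage block generated in Python. The function name `faq_jsonld` is hypothetical, and the question and answer text are placeholders borrowed from the definition above. Whether a given answer engine reads this markup is an assumption, not something this article has verified.

```python
import json


def faq_jsonld(question, answer):
    """Build a schema.org FAQPage structure for one question and answer.

    faq_jsonld is a hypothetical helper name used only for illustration.
    """
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
        ],
    }


# Placeholder content, taken from the AEO definition earlier in this article.
markup = faq_jsonld(
    "What is answer engine optimization?",
    "Optimizing content so AI systems can find it, extract it, trust it, "
    "and surface it as a direct answer to a specific query.",
)

# The serialized output would go inside a <script type="application/ld+json"> tag.
print(json.dumps(markup, indent=2))
```

The markup only restates what the page already says. It helps a machine read a clear answer, but it does not create one.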

If your SEO is weak, your AEO will fail, just like any SaaS growth effort built without a strong foundational playbook. That is not a metaphor. Many AI answer systems literally rely on search indexes to find their sources. You cannot be cited if you cannot be found. You cannot be found if your SEO is broken.

SEO is the base. AEO is what happens at the top of a well-built structure.

The Buyer Behavior Underneath AEO vs SEO

Long-Tail Queries Are Not New. The Volume Is.

Here is what has actually changed.

Buyers have always had specific questions. Before AI, they typed fragments of those questions into Google and hoped the results would help them piece together an answer. The query was short because the search box rewarded brevity.

Now the query is the question. The full question, phrased the way a buyer would actually say it to a knowledgeable colleague, and carrying deeper buyer intent signals that modern SaaS marketing teams need to decode.

What is the best workflow management tool for a marketing team of four people who are already using HubSpot?

That is not a new need. It is a new way of expressing an old need. And the specificity of the expression is the important part.

Because that query contains everything a good marketer needs to know about the buyer. Team size. Existing stack. Function. The fact that they are evaluating, not just researching. The fact that they care about integration, not just features.

Your Sales Conversations Already Have the Answers

Your sales conversations already hold the answers, especially when they are paired with structured approaches like lead scoring and buyer qualification. This is where the real opportunity lives, and almost nobody is pointing at it clearly.

The long-tail conversational queries your buyers are feeding into AI systems are not mysterious. They are predictable. Because the questions buyers ask AI are the same questions they ask your sales team.

What does your SDR hear in the first five minutes of every discovery call? What objections come up at the evaluation stage every single time? What does the champion ask before they go back to get internal buy-in?

Those questions are the queries. Not exact matches. But the intent, the language, the specific anxiety behind the words, that is all there in your CRM if you have been capturing it.

Map your sales conversation data against your content, just as you would when building an effective account-based marketing strategy. Find the questions that come up repeatedly with no strong answer in your content library. Build the answer. Structure it clearly. Publish it as a piece of content that actually helps the person asking.
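The mapping step above can be sketched roughly as follows. This is a simplified illustration, not a production method: it assumes you can export sales questions and content titles as plain strings, and it uses crude word overlap as a stand-in for real semantic matching. The function names, thresholds, and sample data are all hypothetical.

```python
import re
from collections import Counter

# Small filler-word list so overlap is measured on meaningful words only.
STOP_WORDS = {
    "the", "a", "an", "for", "of", "to", "is", "what", "how", "do", "does",
    "we", "with", "it", "in", "and", "your", "our", "can", "i",
}


def tokens(text):
    """Return the set of lowercase words in text, minus filler words."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOP_WORDS}


def content_gaps(sales_questions, content_titles, min_count=2, min_overlap=0.5):
    """Return (question, count) pairs for repeated questions no title covers."""
    counts = Counter(q.strip().lower() for q in sales_questions)
    title_tokens = [tokens(t) for t in content_titles]
    gaps = []
    for question, count in counts.most_common():
        if count < min_count:
            continue  # only questions that come up repeatedly
        q_tokens = tokens(question)
        if not q_tokens:
            continue
        # Share of the question's words found in the best-matching title.
        best = max((len(q_tokens & t) / len(q_tokens) for t in title_tokens), default=0.0)
        if best < min_overlap:
            gaps.append((question, count))
    return gaps


# Hypothetical sample data standing in for a CRM export and a content inventory.
sales_questions = [
    "Does it integrate with HubSpot?",
    "does it integrate with hubspot?",
    "How long does onboarding take?",
    "How long does onboarding take?",
    "Is there a free trial?",
]
content_titles = [
    "HubSpot integration guide: how to integrate in 10 minutes",
    "Pricing overview",
]

gaps = content_gaps(sales_questions, content_titles)
print(gaps)
```

In this toy data, the HubSpot question is already covered by an existing guide, the free trial question appears only once, and the onboarding question is the repeated one with no matching content. That last question is the piece to write next.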

That is AEO. And it is also SEO. Because a question that your buyers ask your sales team is a question that other buyers are typing into Google and now into ChatGPT and Perplexity as well.

The content that answers it well gets found in both places.

Brands Are Measuring the Wrong Thing

Brands are measuring the wrong thing, often relying too heavily on traditional performance marketing metrics. Here is where SaaS marketing teams are getting stuck.

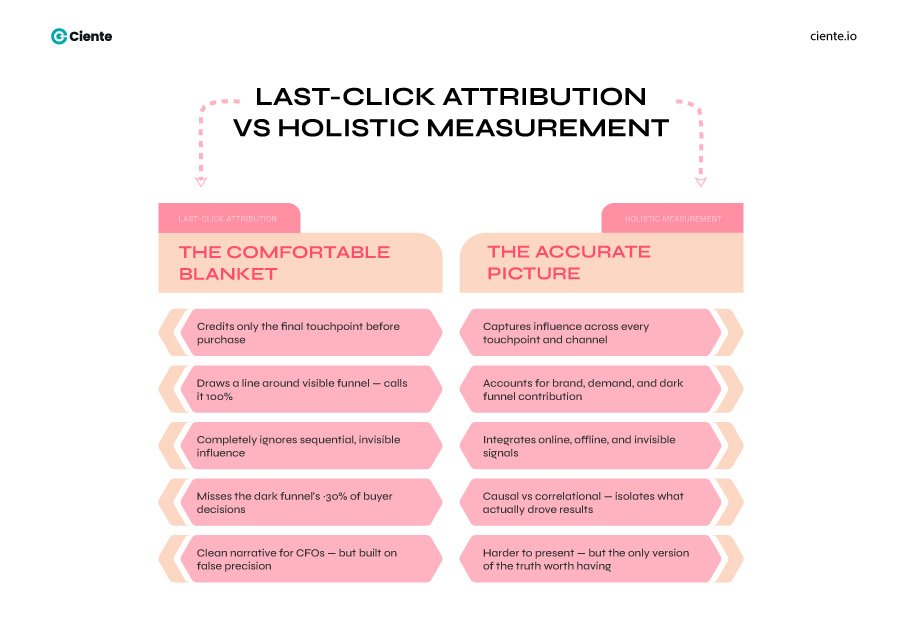

AEO does not produce the same measurement trail that SEO does. Clicks, sessions, time on page, conversion events. The classic attribution model.

When an AI system cites your content and a buyer reads the answer and forms a preference for your brand without ever visiting your website, that does not show up anywhere in your GA4 dashboard. The contribution is real. It is just invisible to the measurement.

So what happens? Marketing teams run one quarter of AEO-adjacent content, see no movement in the metrics they report to leadership, and quietly deprioritize it. The investment stops before the compounding starts.

This is the ROI problem, and it becomes even more complex when benchmark expectations are misaligned. The problem is not that AEO fails to produce returns. It is that the returns do not fit inside the frameworks organizations have built to measure them.

What You Should Actually Be Tracking

The honest answer is that the right measurement for AEO-focused content is the same measurement that has always been right for trust-building content.

Are the right people coming in already understanding who you are and what you do? Are sales cycles shorter for prospects who found you through content versus cold outreach? Are deals closing faster because the buyer arrived pre-educated?

These are pipeline quality signals, not click signals. They tie directly into the broader SaaS marketing challenges teams are trying to solve, and they require qualitative input from sales alongside the quantitative input from your analytics.

Ask your sales team directly. Are you getting prospects who already understand the problem well and are evaluating you seriously from the first call? More of those means the content is working. Whether they came from a Google result or a Perplexity citation is almost irrelevant to the business outcome.

The channel changed. The buyer behavior it produces has not.
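The sales-cycle comparison described above can be sketched in a few lines. This is a rough illustration under stated assumptions: it presumes each closed deal carries a first-touch source label and a days-to-close figure, which many CRMs can export but which this article does not specify. The field names `source` and `days_to_close` and all the numbers are hypothetical.

```python
from collections import defaultdict
from statistics import median


def median_cycle_by_source(deals):
    """Group closed deals by source and return the median days to close for each."""
    by_source = defaultdict(list)
    for deal in deals:
        by_source[deal["source"]].append(deal["days_to_close"])
    return {source: median(days) for source, days in by_source.items()}


# Hypothetical closed-won deals; the values are made up for illustration.
deals = [
    {"source": "content", "days_to_close": 30},
    {"source": "content", "days_to_close": 42},
    {"source": "content", "days_to_close": 36},
    {"source": "cold_outreach", "days_to_close": 60},
    {"source": "cold_outreach", "days_to_close": 75},
]

cycles = median_cycle_by_source(deals)
print(cycles)
```

The median is used rather than the mean so that one unusually slow deal does not distort the comparison. A number like this is only a starting point, and it still needs the qualitative read from sales to mean anything.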

Where AEO Is Actually a Real Differentiator

The One Thing That Is Genuinely Different

Not everything about this conversation is recursive.

There is one thing AEO introduces that traditional SEO never quite demanded. And it is worth being honest about.

Precision.

SEO has always rewarded comprehensiveness. Cover the topic thoroughly. Build the pillar page. Create the cluster of supporting content. Show the search engine that you are the authority on everything in this domain.

AEO rewards the opposite, much like how product-market fit demands precision over volume in messaging. Answer one question so clearly and completely that an AI system would be confident citing it as the definitive response to that specific query.

Not the comprehensive page. The precise answer. Specific enough to be unambiguous. Clear enough to be extracted without surrounding context. Trustworthy enough to be cited when someone asks a question with real stakes attached to it.

This is a different kind of content discipline, similar to how modern SaaS teams are evolving their strategies with AI-driven marketing approaches. It requires knowing your buyer’s specific questions at a level of detail that most content strategies never go to. It requires writing for the moment of need rather than for the category broadly.

And because it is harder, most brands are not doing it well. Which means the ones who do it well have a real advantage.

The Specific Query Is the Competitive Moat

The specific query is the competitive moat, especially in increasingly competitive SaaS markets. Think about what it means to be the brand that answers a very specific question for a very specific buyer in a very specific moment.

A founder searching for workflow tools for a small marketing team already using HubSpot is not in early awareness. They are close to a decision. The AI system that answers their question is not just providing information. It is shaping the shortlist. It is influencing which vendor they research next.

If your content is the answer, your brand is in the conversation before your sales team ever gets a call. That is not an SEO win. It is a trust win. And it starts with knowing the question well enough to answer it before it is asked.

That knowledge comes from your sales data. Your customer interviews. Your churn conversations. The questions in your support tickets. The objections in your lost deal analysis.

Not from keyword tools. From your buyers, just like the insights behind successful SaaS marketing campaigns.

AEO vs SEO: The Topic Itself Is the Disconnect Between Buyers and Marketing Teams

The industry will keep inventing new acronyms, just as it continues to evolve across different SaaS marketing channels.

AEO. GEO. AIO. Whatever comes next. Each one will get a wave of content explaining why the previous approach is now obsolete and why this new framework is the thing everyone needs to urgently adopt.

And each time, the underlying logic will be the same.

Understand your buyer. Answer their actual questions. Build content that earns trust by being genuinely useful. Structure it so machines can read it. Distribute it on a domain that has earned credibility over time.

That is SEO. It is also AEO. It is also whatever the next acronym will be.

The interface has changed, but the underlying logic is still the same: solving buyer problems.

And the brands that are going to win in AI search are not the ones frantically optimizing for citations by hacking prompt patterns and stuffing schema. They are the ones who did the slow, deliberate work of understanding what their buyers actually need to know and building the clearest possible answer to it.