Content Marketing vs. Sales for SaaS Growth: A Strategy That Asks the Wrong Question

The content vs. sales debate has been running for years. Here’s why the war itself is the wrong battle – and what SaaS organizations should be fighting for instead.

The debate has been running for years now. Sales teams think marketing is making noise. Marketing teams think sales is sabotaging their leads. Both factions are presenting their case like it’s 1847 and they’re arguing land borders.

Here’s the thing: the war itself is the problem.

Not the teams. Not the strategy. The framing.

The first touch is dead. Nobody told the playbooks.

Ask most SaaS organizations how they think about their growth, especially when defining their overall SaaS marketing strategy, and they’ll tell you one of two things: “We’re content-led” or “We’re sales-led.” Both are incomplete sentences pretending to be strategies, and both ignore the deeper SaaS marketing challenges that create this divide.

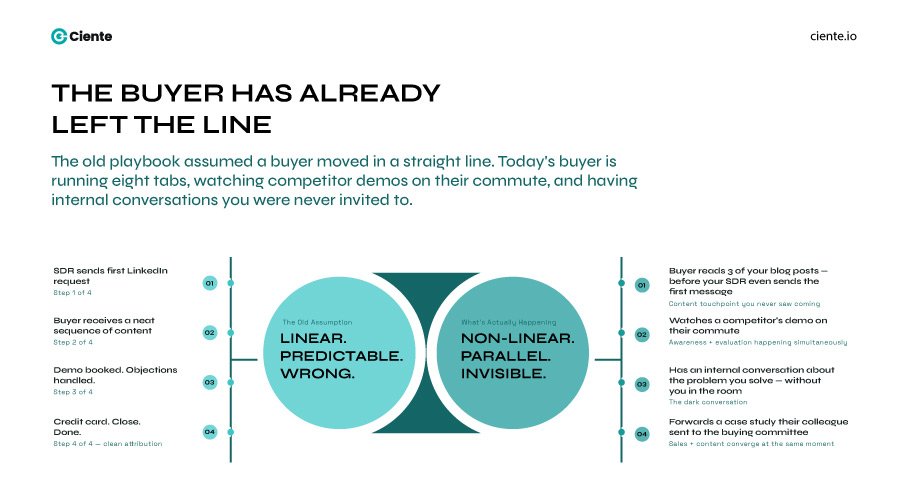

The premise behind choosing one is a relic – it assumes a buyer moves in a straight line. Enter through one door, receive information in a neat sequence, hand over a credit card, and close.

That’s not how anyone buys anymore, especially in a landscape shaped by evolving SaaS market trends.

Buyers are running eight tabs. They’ve already read three of your blog posts before your SDR sent the first LinkedIn request. They watched a competitor’s demo during a commute. They had an internal conversation about the problem you solve, one you weren’t invited to.

The buying journey is non-linear, a reality often overlooked when companies chase SaaS product-market fit in isolation. It has always been non-linear. The industry just didn’t have the data to prove it.

What’s changed is this: the buyer is in multiple stages at once. Awareness, consideration, and late-stage evaluation are happening simultaneously. And the moment you force them into one lane – content or sales – you lose them in the ones you abandoned.

The case for content-led growth, and why it’s incomplete

Here’s what the content evangelists get right.

Inbound works. A well-placed article that solves a real problem is the closest thing to a permanent asset in marketing. It compounds. It works at 2 a.m. while your sales team is asleep, much like well-executed SaaS marketing campaigns that keep scaling. And it builds authority in a way a cold outreach sequence simply cannot.

And for SaaS companies in particular – where the buyer is often technical, skeptical, and deeply tired of vendor language – content that actually teaches something is the fastest way to disarm them. Trust before pitch, a principle reinforced across modern SaaS social media marketing efforts.

But here’s the part nobody says out loud.

Content without sales feedback is writing in a vacuum, much like teams relying solely on disconnected SaaS marketing tools without real user insight.

Who tells the content team what questions buyers are actually asking, including the insights surfaced through account-based marketing for SaaS?

Sales does.

Without that input, content teams are optimizing for what they imagine the buyer wants. Sometimes they get it right. More often, they’re producing assets that are smart but off-frequency – like playing a concert in the right key but the wrong venue.

The pipeline dries up.

The case for sales-led growth, and why it’s also incomplete

Sales-led growth works, until it doesn’t, particularly when it operates without insights from SaaS performance marketing data.

The argument is seductive: shorter feedback loops, direct revenue attribution, and high control over the message. An outbound team with a good list and a clear ICP can move fast.

But there’s a cost.

The cost is attention, something already stretched thin across channels like SaaS email marketing.

Buyers are overwhelmed, especially in ecosystems shaped by aggressive SaaS affiliate marketing and outreach loops. Inbox fatigue is real. The average enterprise buyer receives enough outreach in a week to fill an inbox for a month. And the threshold for “this is worth my time” keeps rising – because buyers know what an SDR sequence looks like; they’ve been through seventeen of them this quarter.

Your rep has ten minutes of credibility before the prospect decides whether to engage or file the conversation into the void. If there’s nothing to point to (no authoritative content, no thought leadership, no insight like what you’d gain from analyzing competitor SaaS marketing strategies, no signal that this company has something worth saying beyond its own features), the conversation stalls on price and proof, and you’re competing on margin.

Meanwhile, the inbound pipeline doesn’t refill itself. It needs consistent investment, backed by dedicated SaaS marketing budgets.

The dichotomy nobody should want

Here’s what the versus war actually costs: touchpoints, the kind often tracked in SaaS marketing benchmarks.

A buyer’s journey might have twelve meaningful interactions, including signals from SaaS referral marketing loops. Some of those are content. Some are sales. Some are both at the same time – a rep sends a relevant article mid-conversation, and the buyer forwards it to their buying committee.

If an organization invests in one channel and lets the other atrophy, it loses entire segments of that twelve-step path. And because buying is non-linear, the gaps don’t show up cleanly in the data. The pipeline looks fine until it doesn’t, often because of overlooked mistakes in outsourcing SaaS marketing. And by the time it doesn’t, the organization has normalized the leak.

The real problem isn’t content versus sales.

It’s the misalignment between them. And that misalignment is structural, not personal.

What alignment actually looks like

It doesn’t look like one meeting a month where the teams compare numbers.

It looks like content teams sitting in on discovery calls, an approach critical for scaling SaaS startup marketing. It looks like sales reps sharing verbatim objections so content can turn them into assets. It looks like a shared understanding of what “qualified” means: not a metric passed over a wall, but a conversation.

Sales tells content what buyers are afraid of. Content turns that into something a buyer will read at 11 p.m. before the board meeting. Sales closes on the trust the content built.

Neither works alone. Both are reduced without the other.

The first touch has never been the only touch. Every channel your buyer encounters, from content to outreach to pricing conversations shaped by SaaS marketing agency pricing models, is part of one experience. The moment you optimize one channel at the expense of the other, you introduce friction into that experience.

And friction, in a long B2B cycle, is just a slower version of losing the deal.

The question to stop asking

Stop asking: content or sales?

Start asking: where is the buyer, and what do they need from us right now?

Sometimes the answer is an article that solves a problem they didn’t know you understood. Sometimes it’s a rep who picks up the phone at exactly the right moment, especially in industries rapidly adopting SaaS, like those discussed in “Why Manufacturers Are Switching to SaaS.”

That’s fine. The buyer doesn’t care who gets the credit.