Most B2B teams track SAO volume but ignore what happens after acceptance. Here’s why the sales accepted opportunity is your most gamed pipeline metric.

To understand whether a B2B revenue engine is actually healthy or quietly breaking down, skip the MQL count and look at what happens at the sales-accepted opportunity stage, alongside the broader pipeline health indicators usually grouped under sales pipeline metrics.

That handoff, the moment when a sales rep reviews a lead and decides it is worth pursuing, is where the optimism in pipeline forecasts meets the reality of buyer readiness.

And for most organizations, the gap between those two things is wider than either team wants to admit.

The sales accepted opportunity, or SAO, is a quality gate within a broader structured sales process that determines whether leads are truly ready for pipeline progression.

Marketing passes a qualified lead. Sales reviews it against an agreed set of criteria. If it meets the bar, it becomes an SAO and enters the pipeline as a real opportunity. In practice, it is one of the most gamed, most argued-over, and most inconsistently applied metrics in B2B go-to-market.

How that happens, and what it costs, is worth understanding properly.

What a sales accepted opportunity is supposed to do

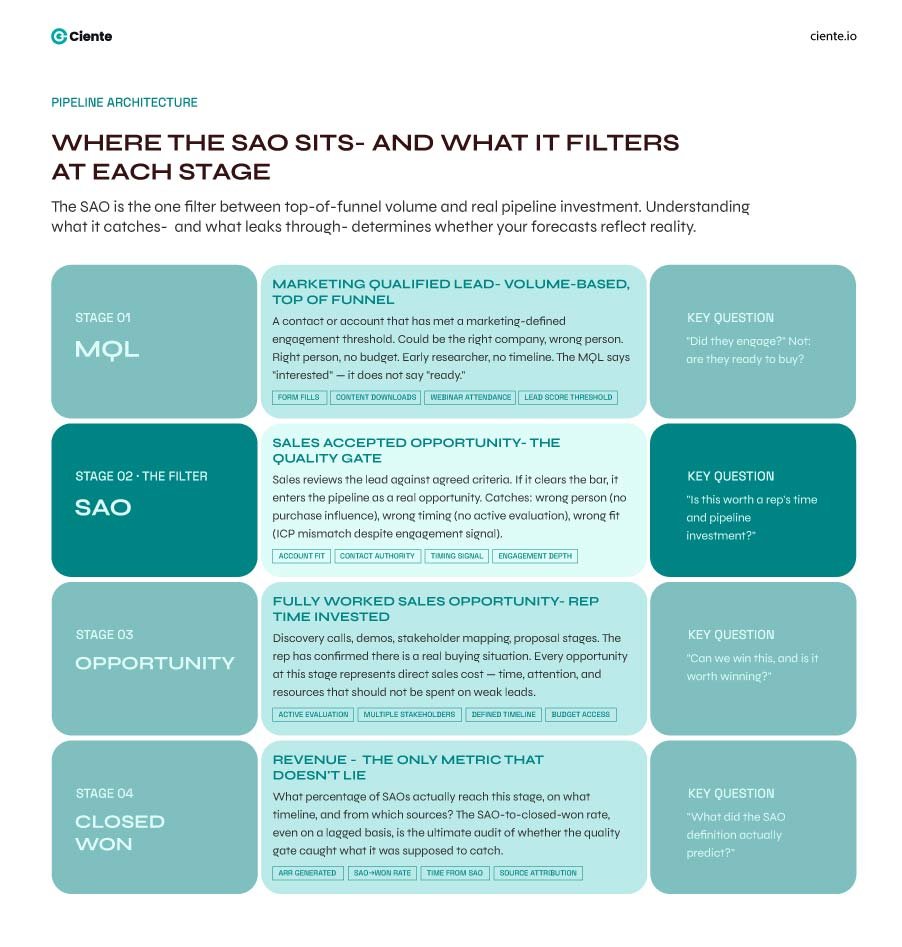

The SAO sits between MQL and a fully worked sales opportunity. Its job is to act as a filter, especially critical in the middle of the funnel, where lead quality often determines pipeline efficiency.

Not every MQL is sales-ready. Some are early-stage researchers. Some are the right company but the wrong person. Some fit the ICP on paper but have no active budget or timeline.

The SAO stage exists to catch all of that before a rep invests significant time.

When it works correctly, the SAO gives both teams a shared checkpoint.

Marketing knows their leads are being evaluated against a defined standard, not just an SDR’s gut. Sales knows they are not expected to work every lead that comes through, only those that clear the bar. And leadership has a metric that reflects real pipeline potential rather than top-of-funnel volume. That is the theory.

Getting there requires something most teams underestimate: a definition that both sides actually agree on and consistently apply, often supported by strong sales enablement practices.

The criteria problem

Most SAO definitions in the wild are either too loose or too rigid.

The loose version is some variation of: the rep reviewed it and decided to call. That tells you almost nothing about the quality of leads. A rep under quota pressure will work almost any lead that has a phone number attached. An SDR who had a bad week will reject leads that should move forward.

With loose criteria, the SAO number tracks rep psychology as much as it tracks lead quality.

The rigid version goes the other way.

Organizations that try to enforce strict BANT criteria at the SAO stage often struggle in modern selling environments shaped by digital sales transformation.

In long B2B sales cycles, buyers rarely have all four boxes checked before a first conversation. Requiring it means marketing either games the criteria to get leads through, or the SAO rate drops to a level that makes everyone nervous about demand-generation ROI.

The criteria that actually work sit in between and are often strengthened through data-driven sales analysis rather than rigid frameworks.

They are specific enough that two different reps reviewing the same lead would reach the same decision, but flexible enough to reflect how B2B buyers actually behave. That usually means defining minimum thresholds around fit, engagement signal, and some indication of relevant timing, without demanding full qualification before the first call.
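To make "two reps, same decision" concrete, here is a minimal sketch of criteria written as explicit thresholds. Every field name and cutoff (`fit_score`, `engagements_30d`, `MIN_FIT_SCORE`, and so on) is a hypothetical placeholder, not a recommended value; the point is only that the rule is written down and returns its reasons.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration only. The real values should be
# agreed jointly by marketing and sales.
MIN_FIT_SCORE = 60        # 0-100 ICP fit score
MIN_ENGAGEMENTS_30D = 2   # meaningful touches in the last 30 days


@dataclass
class Lead:
    fit_score: int            # how closely the account matches the ICP
    engagements_30d: int      # count of meaningful engagement events
    has_timing_signal: bool   # any indication of relevant timing


def review_lead(lead: Lead) -> tuple[bool, list[str]]:
    """Return (accepted, reasons); reasons lists every threshold missed."""
    reasons = []
    if lead.fit_score < MIN_FIT_SCORE:
        reasons.append("fit below threshold")
    if lead.engagements_30d < MIN_ENGAGEMENTS_30D:
        reasons.append("insufficient engagement")
    if not lead.has_timing_signal:
        reasons.append("no timing signal")
    return (not reasons, reasons)
```

Returning the reasons alongside the decision matters more than the thresholds themselves: a rejection that says which bar was missed is feedback marketing can act on, while a bare "rejected" is not.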

Why the SAO number gets gamed, and what that hides

When marketing is measured on MQL volume and sales is measured on pipeline created, the SAO sits at an awkward intersection, one that highlights the ongoing challenge of sales and marketing alignment. Marketing wants its leads accepted. Sales wants to protect its pipeline quality metrics.

Neither of those motivations, on its own, produces an honest SAO count.

On the marketing side, the game is lead inflation.

If the criteria are vague, there is pressure to push leads through that are directionally qualified rather than actually ready. A lead from a target account who downloaded a whitepaper might technically meet the engagement threshold, but if the downloading contact is a junior analyst with no purchase influence, accepting them as an SAO is wishful thinking dressed as pipeline.

On the sales side, the game works differently, often influenced by how reps manage outreach through structured sales cadence strategies.

Reps sometimes accept leads they have no intention of working seriously, because the act of accepting looks good on activity dashboards. The lead sits in the CRM as an open opportunity, ages past the follow-up window, and eventually gets closed as lost or disqualified without a real conversation ever happening.

Leadership sees an SAO rate that looks healthy.

The actual follow-through rate tells a different story.

A high SAO rate paired with a low contact rate in the same period is one of the clearest signs that the definition is not working, or that nobody enforces it consistently.

The data you need to catch this early

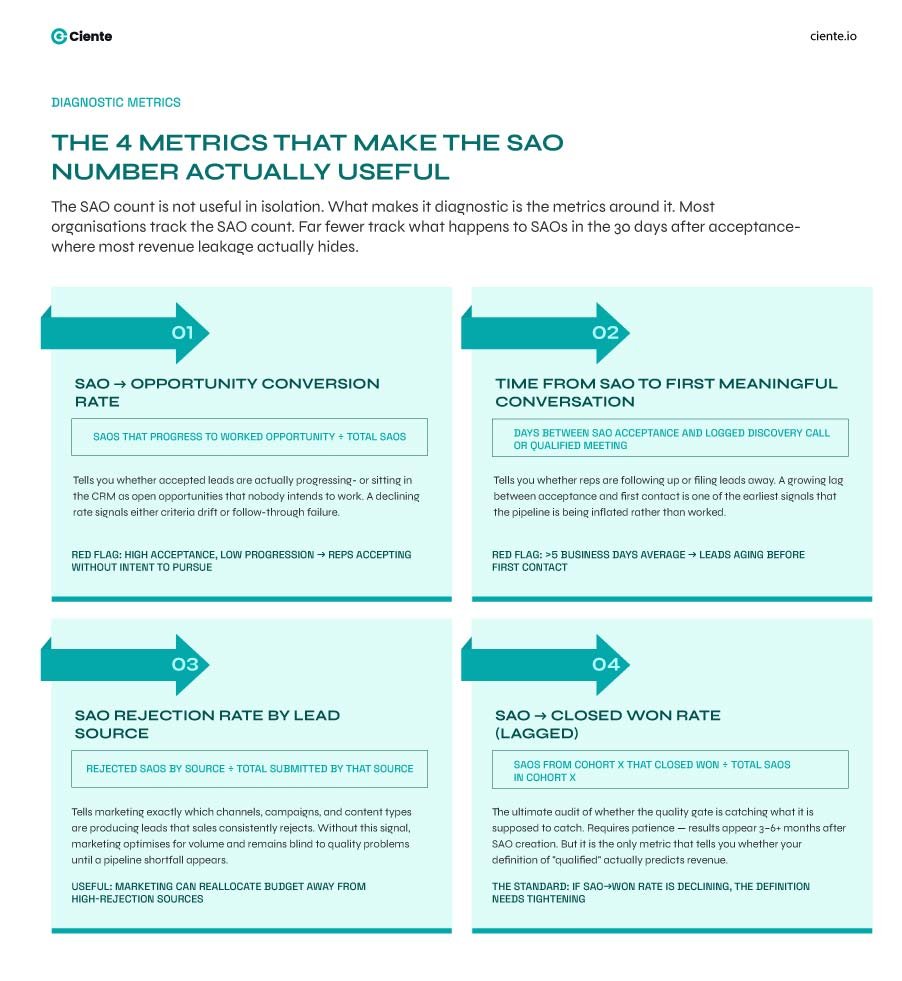

The SAO number isn’t useful in isolation and should always be evaluated alongside broader pipeline and conversion metrics.

What makes it diagnostic is the metrics around it. The SAO-to-opportunity conversion rate tells you whether accepted leads are actually progressing. The average time from SAO to the first meaningful conversation tells you whether reps are following up or filing leads away.

SAO-to-closed-won rate, even on a lagged basis, tells you whether the quality gate is catching what it is supposed to catch.

Most organizations track the SAO count. Far fewer track what happens to SAOs in the thirty days after acceptance. That gap is where a lot of revenue leakage hides, and where the real quality problem becomes visible if you are willing to look at it.
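As a rough sketch of what tracking the post-acceptance window could look like, the function below summarizes a cohort of SAOs. The `Sao` record and its fields are assumptions about what a CRM export might contain, not a real schema, and the cohort is assumed to be old enough that the 30-day window has fully elapsed.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class Sao:
    # Hypothetical fields; map them to whatever your CRM actually stores.
    accepted_on: date
    first_conversation_on: Optional[date] = None  # None = never contacted
    became_opportunity: bool = False
    closed_won: bool = False


def post_acceptance_metrics(saos: list[Sao], window_days: int = 30) -> dict:
    """Summarize what happened to a cohort of SAOs after acceptance."""
    total = len(saos)
    if total == 0:
        return {}
    # Days from acceptance to first conversation, for contacted SAOs only.
    days_to_contact = [
        (s.first_conversation_on - s.accepted_on).days
        for s in saos
        if s.first_conversation_on is not None
    ]
    contacted_in_window = sum(1 for d in days_to_contact if d <= window_days)
    median_days = median(days_to_contact) if days_to_contact else None
    return {
        "sao_count": total,
        "contact_rate_in_window": contacted_in_window / total,
        "median_days_to_first_conversation": median_days,
        "sao_to_opportunity_rate": sum(s.became_opportunity for s in saos) / total,
        "sao_to_closed_won_rate": sum(s.closed_won for s in saos) / total,
    }
```

Read together, a large `sao_count` next to a low `contact_rate_in_window` is the accept-and-ignore pattern described earlier, visible in one place instead of across two dashboards.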

The conversation between marketing and sales that usually does not happen

SAO disputes are common, particularly in organizations without clearly defined sales collaboration and alignment frameworks.

Marketing says sales is rejecting qualified leads to avoid accountability for the pipeline. Sales says marketing is sending over contacts who have no buying intent and calling them qualified.

Both sides have real evidence for their position, which is usually a sign that the shared definition was never actually resolved; it was just papered over.

Getting to an honest SAO standard requires a specific kind of conversation that most revenue teams find uncomfortable.

It means marketing sitting with sales in deal reviews, aligning on what qualifies as a real opportunity (similar to how multi-threading in sales emphasizes deeper account engagement), and hearing directly why certain lead types do not convert. It means sales being specific about what good looks like rather than defaulting to broad rejections. And it means leadership being willing to hold the SAO rate flat or let it drop as the definition gets tightened, because chasing a higher number before the criteria are right produces a cleaner-looking version of the same problem.

The organizations that have worked through this land on a monthly calibration process. A sample of accepted and rejected leads gets reviewed jointly. Edge cases get discussed and used to refine the criteria.
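One way such a calibration sample might be pulled and scored is sketched below. The dictionary keys `decision` and `joint_decision` are hypothetical names, and the equal split between accepted and rejected leads is an assumption about how the sample is drawn, not a prescribed method.

```python
import random


def calibration_sample(leads, per_group=10, seed=None):
    """Draw a random sample with up to `per_group` accepted and rejected leads.

    `leads` is a list of dicts with a hypothetical "decision" key holding
    either "accepted" or "rejected".
    """
    rng = random.Random(seed)
    sample = []
    for decision in ("accepted", "rejected"):
        group = [lead for lead in leads if lead["decision"] == decision]
        sample.extend(rng.sample(group, min(per_group, len(group))))
    return sample


def agreement_rate(reviewed):
    """Share of sampled leads where the joint review matched the original call.

    Each item needs "decision" plus a hypothetical "joint_decision" key
    filled in during the joint review.
    """
    if not reviewed:
        return None
    matches = sum(1 for lead in reviewed if lead["decision"] == lead["joint_decision"])
    return matches / len(reviewed)
```

Sampling rejected leads as well as accepted ones is the important design choice here: it lets the review catch good leads that were turned away, not only weak ones that were waved through. A falling agreement rate month over month suggests the written criteria and the decisions reps actually make are drifting apart.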

Over time, the definition stops being a document that lives in a shared drive nobody reads and becomes something both teams actually reason from. That takes longer than buying a lead scoring tool, but it is what actually moves the number.

Where SAO quality connects to the broader revenue picture

Forecasting accuracy

Forecasting accuracy in B2B sales depends heavily on pipeline quality, often supported by structured sales analytics and reporting practices.

Pipeline quality starts at the SAO stage.

If the leads entering the pipeline as accepted opportunities are inconsistently qualified, every forecast built downstream is working with flawed inputs. Sales leaders know this, which is why the experienced ones often apply their own informal discount rate to the pipeline generated by specific campaigns or lead sources.

The discount is a workaround for a definition problem that was never properly solved.
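The informal discount can at least be made explicit. The sketch below weights each source's open pipeline by that source's historical SAO-to-closed-won rate; the source names and figures in the usage example are invented for illustration, and a real forecast would involve more than this single multiplication.

```python
def expected_pipeline(open_value_by_source, win_rate_by_source):
    """Weight each source's open pipeline by its historical SAO-to-won rate.

    Raises KeyError if a source has no measured rate, so missing data is
    surfaced instead of silently treated as zero.
    """
    return {
        source: value * win_rate_by_source[source]
        for source, value in open_value_by_source.items()
    }


# Illustrative numbers only.
open_value = {"webinar": 400_000, "outbound": 250_000}
win_rates = {"webinar": 0.05, "outbound": 0.20}
weighted = expected_pipeline(open_value, win_rates)
```

In this made-up case the webinar pipeline is larger on paper but is worth roughly 20,000 after weighting, against roughly 50,000 for outbound. That gap is what a leader's gut-feel discount approximates; measuring it per source shows where the definition is letting weak leads through.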

When the SAO definition is clear and consistently applied, forecast accuracy improves because the pipeline is built from a more honest foundation.

Opportunities that enter at the SAO stage have cleared a real bar, which means conversion rate assumptions are grounded in something reliable rather than historical averages diluted by a weak pipeline.

Marketing’s ability to optimize

Marketing cannot improve lead quality without visibility into outcomes, which is where data analytics in sales plays a critical role. If the SAO feedback loop is broken, either because rejections are logged inconsistently or because accepted leads never get a disposition, marketing is optimizing in the dark.

They will fund the channels and content types that produce volume, because volume is what they can see, even if the quality of what those channels produce is poor.

A functioning SAO process gives marketing something they rarely get: a clear signal about which lead sources, which content types, and which ICPs are actually producing revenue-relevant pipeline. Not just which ones generate clicks or downloads, but which ones produce leads that sales accepts, follows up on, and converts. That signal allows a demand generation team to make real budget decisions rather than defensive ones.

What good looks like in 2026

The SAO conversation has become more complicated as buying journeys evolve with AI-driven sales transformation and longer decision cycles.

A single contact accepting a call is not the signal it once was. Increasingly, the SAO criteria that hold up in practice are account-level, not contact-level.

Is there evidence of multi-threaded engagement from the target account? Has someone with actual purchase influence been identified? Is there a timing signal, even a soft one, that suggests an active evaluation?

Organizations that have updated their SAO definition to reflect account-level signals rather than individual lead behavior tend to see better conversion rates downstream because the opportunities entering the pipeline represent accounts that are actually in motion rather than individuals who clicked something once.
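The three account-level questions above can be expressed as a simple check. This is a sketch under stated assumptions: the `Contact` and `Account` shapes, the two-contact minimum for "multi-threaded", and the choice to require all three signals are illustrative, not a standard.

```python
from dataclasses import dataclass, field


@dataclass
class Contact:
    engaged: bool                 # any recent meaningful engagement
    has_purchase_influence: bool  # e.g. a budget holder or named evaluator


@dataclass
class Account:
    contacts: list[Contact] = field(default_factory=list)
    has_timing_signal: bool = False  # even a soft one counts


def account_signals(account: Account, min_engaged_contacts: int = 2) -> dict[str, bool]:
    """Evaluate the three account-level signals separately."""
    engaged = [c for c in account.contacts if c.engaged]
    return {
        "multi_threaded": len(engaged) >= min_engaged_contacts,
        "influencer_identified": any(c.has_purchase_influence for c in account.contacts),
        "timing_signal": account.has_timing_signal,
    }


def is_account_level_sao(account: Account, min_engaged_contacts: int = 2) -> bool:
    """Accept only when every signal is present (an assumption, not a rule)."""
    return all(account_signals(account, min_engaged_contacts).values())
```

Keeping the signals separate from the final decision means a rejected account still shows which of the three was missing, for example a single engaged analyst with no one of influence identified yet.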

None of this is complicated in principle.

A definition both teams trust. A calibration process that keeps it honest. Metrics that track what happens after acceptance, not just whether acceptance occurred.

The companies that have those three things in place treat the SAO as what it is meant to be: an actual signal about pipeline quality. The ones still arguing over the definition every quarter are measuring something; they are just not sure what it is.